On-Premise Deployment of Open-Source LLM

Secure AI with zero external data exposure

Client

Leading Manufacturer

Region

Global

Key Outcomes

Business Challenge

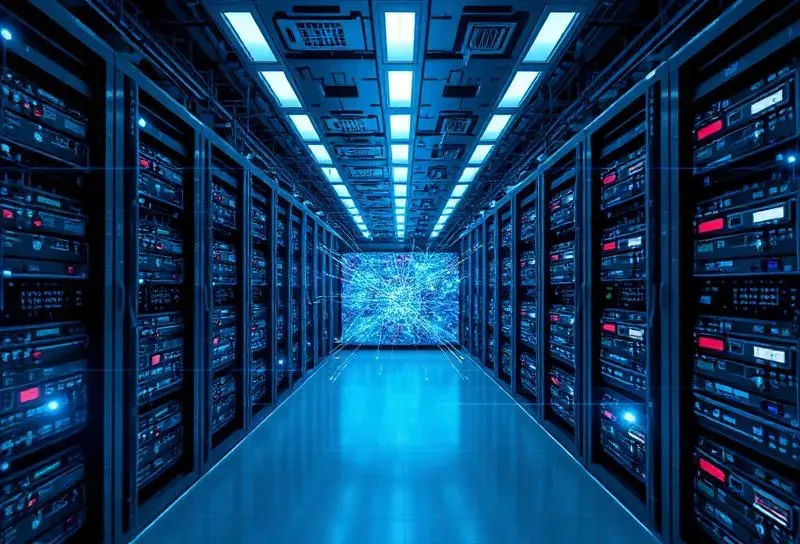

The client needed to deploy Generative AI across internal teams but could not use cloud-based LLM APIs due to data sensitivity, compliance requirements, and long-term cost concerns. They required full control over data, models, and infrastructure.

Solution Overview

DIATOZ designed and deployed a fully on-premise LLM solution using open-source models hosted on dedicated GPU infrastructure. Key elements included secure on-prem GPU deployment, custom model fine-tuning on enterprise data, role-based access control, and a scalable, Dockerized architecture.
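To make the role-based access control element more concrete, here is a minimal Python sketch of how a request gateway might check a user's role before forwarding a prompt to a locally hosted model. It is an illustration only, not the deployed implementation: the role names, model names, and the `can_query` and `route_request` helpers are all hypothetical, and the inference call is stubbed out so nothing leaves the process.

```python
from dataclasses import dataclass

# Hypothetical role-to-model mapping; the case study does not list
# the actual roles or models used in the deployment.
ROLE_PERMISSIONS = {
    "engineer": {"base-model", "finetuned-model"},
    "analyst": {"finetuned-model"},
    "guest": set(),
}


@dataclass(frozen=True)
class User:
    name: str
    role: str


def can_query(user: User, model: str) -> bool:
    """Return True if the user's role is allowed to query the given model."""
    # Unknown roles fall back to an empty permission set (deny by default).
    return model in ROLE_PERMISSIONS.get(user.role, set())


def route_request(user: User, model: str, prompt: str) -> str:
    """Check access, then hand off to a local inference backend (stubbed)."""
    if not can_query(user, model):
        raise PermissionError(f"role '{user.role}' may not query '{model}'")
    # Placeholder: a real deployment would call the on-prem inference
    # server here instead of returning a description string.
    return f"[{model}] would process {len(prompt)} characters for {user.name}"
```

In this sketch, unknown roles are denied by default, which is one common choice when access to models fine-tuned on sensitive enterprise data has to be restricted.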

Architecture & Engineering

Technology Stack

Business Impact

Why DIATOZ

Deep expertise in private AI infrastructure and enterprise security requirements.
