Manufacturing

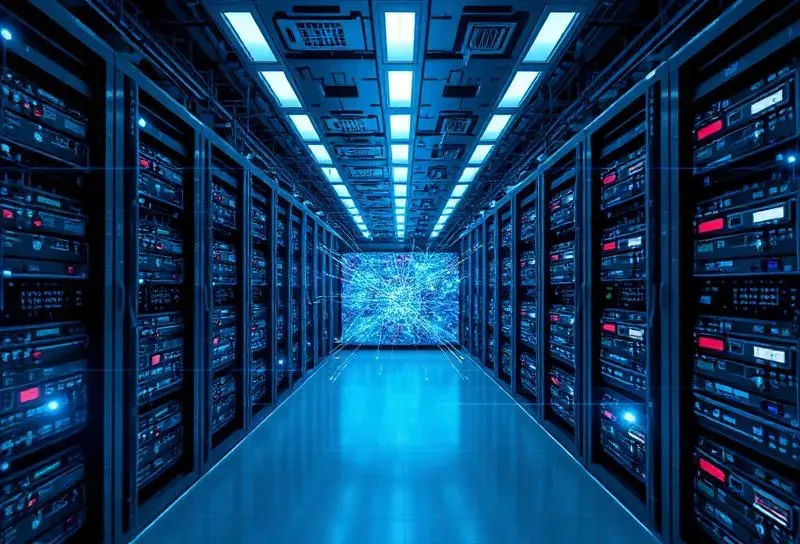

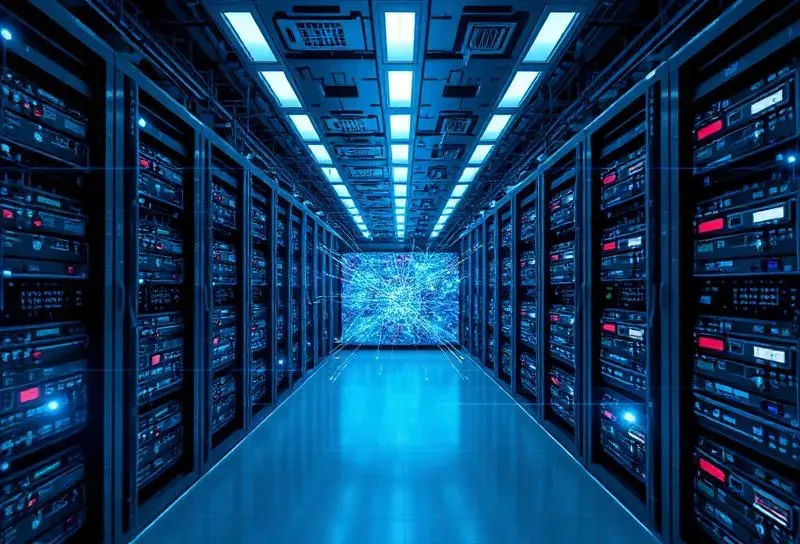

On-Premise LLM Deployment

Deployed on-prem LLM for secure enterprise AI with zero external data exposure.

Trusted by enterprises and fast-growing innovators across industries

From AI strategy to deployment, we deliver end-to-end solutions that transform enterprises across FinTech, Telecom, Manufacturing, and Healthcare.

Private AI infrastructure, on-prem LLM deployment, AI copilots & assistants, intelligent document systems, and predictive analytics.

SaaS & enterprise platforms, cloud-native microservices, high-scale real-time systems, and security-first architecture.

MuleSoft & API-led connectivity, Salesforce & core banking integration, event-driven architectures, and workflow automation.

Spark, Kafka, IoT pipelines, workforce & operational analytics, real-time dashboards, and decision intelligence systems.

Our proven methodology ensures enterprise-grade quality at every stage, from discovery through continuous optimization.

Deep-dive analysis

Solution design

Agile development

DevSecOps

Cloud deployment

Continuous improvement

Manufacturing

Deployed on-prem LLM for secure enterprise AI with zero external data exposure.

Retail & E-Commerce

Built high-throughput order processing system with real-time inventory sync across warehouses.

Telecom

Implemented zero-trust security architecture for edge computing infrastructure.

We work across complex, regulated, and high-scale environments, bringing deep industry expertise to every engagement.

Core banking, payments, lending platforms, and regulatory compliance solutions.

Edge computing, network optimization, and zero-trust security architectures.

IoT integration, predictive maintenance, and smart factory solutions.

Omnichannel platforms, real-time inventory, and fulfillment automation.

Clinical data systems, compliance automation, and patient engagement platforms.

Fleet tracking, route optimization, and warehouse management systems.

Where product thinking meets AI innovation: solutions built from deep experience solving complex business challenges.

AI-Powered Work Platform for Growing Companies

Replace scattered tools with one AI-powered workspace that helps CEOs and CTOs execute faster, gain real-time visibility, and scale teams with confidence as they grow from 50 to 500+ employees.


Supervisory Intelligence Platform for Industrial Operations

Connect ERP, MES, CMMS, SCADA, and IoT data to generate AI-driven operational intelligence. Valtren is the supervisory intelligence layer for industrial operations. It correlates operational signals across systems, so recurring failures drop and RCA gets faster.

Jobs, Hiring, Recruitment

We are on a mission to bring job seekers and employers together to help them find one another seamlessly.

AI-Powered Customer Success Platform

Pulse unifies health scores, AI insights, and automated playbooks to help Customer Success teams prevent churn and drive expansion — all in one command center.

AI-Powered Yard Management

FlowYard gives ports, logistics yards, and OEMs full visibility into vehicle movements, slot utilization, and operations — powered by AI that learns your patterns.

From global enterprises and government bodies to innovative startups, we deliver transformative solutions across industries and continents.

Recognized by leading industry platforms

CIO Insider

Startup of the Year 2020

Indian Achievers' Award

Promising Company

Prime Insights

30 Most Trusted Brands

Innovative Zone

Company in Focus

Hear from the enterprises and innovators who've partnered with DIATOZ to transform their digital capabilities.

"My experience with DIATOZ can be summarized in a few sentences: 'Commitment to the cause under any circumstances, easy adaptability to new technologies, a young team that shows remarkable maturity in the way they address the solution at hand.' I have worked with them on several critical deliveries and continue to work with them on various initiatives of ours and it has always been a pleasure."

"We are very impressed with the progress and the way you question us to improve the product. We are excited more now."

"It was a really great experience while working with DIATOZ. We found that they have a great ability to articulate project requirements, problem comprehension and identifying high level building blocks. At the same time they provide a good design to take care Non-Functional Requirements like Scalability, Fault-Tolerance, High Availability, Load balance, Application Level Security etc. We really liked the prompt action by them as per the urgency of the release and even really appreciate their post release support."

From AI strategy to production-grade systems, DIATOZ partners with you to turn ambitious ideas into measurable business outcomes.
